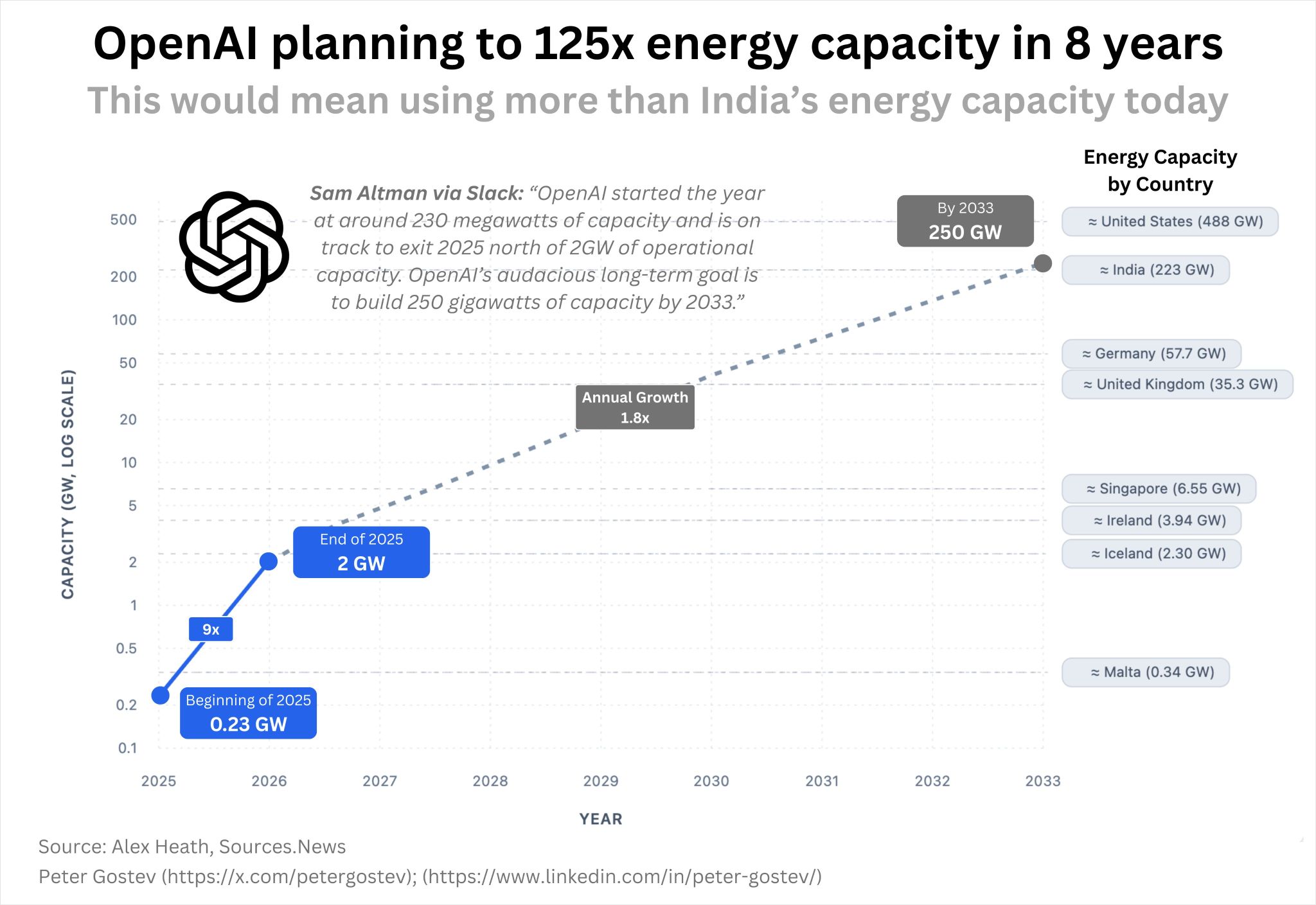

OpenAI is reportedly planning an energy expansion on the scale of a nation.

By 2033, it aims to reach 250 GW of capacity: more than India’s current grid (223 GW).

NVIDIA is backing this with up to $100 billion to roll out millions of GPUs, starting with its Rubin platform, across at least 10 GW of new data centres.

This is the part most people miss: whoever controls the energy infrastructure for AI essentially controls AI itself. The best algorithm is worthless without the power to run it.

For 50 years we optimised software to use less compute. Now we’re redesigning entire power grids to feed the compute. That’s not iteration; that’s inversion.

The constraint just became the strategy.